Embrace the Human to Overcome the AI Capability Absorption Gap

Why we need more focus on learning, thinking and knowledge engineering if we are to make progress with AI-enabled organisational transformation

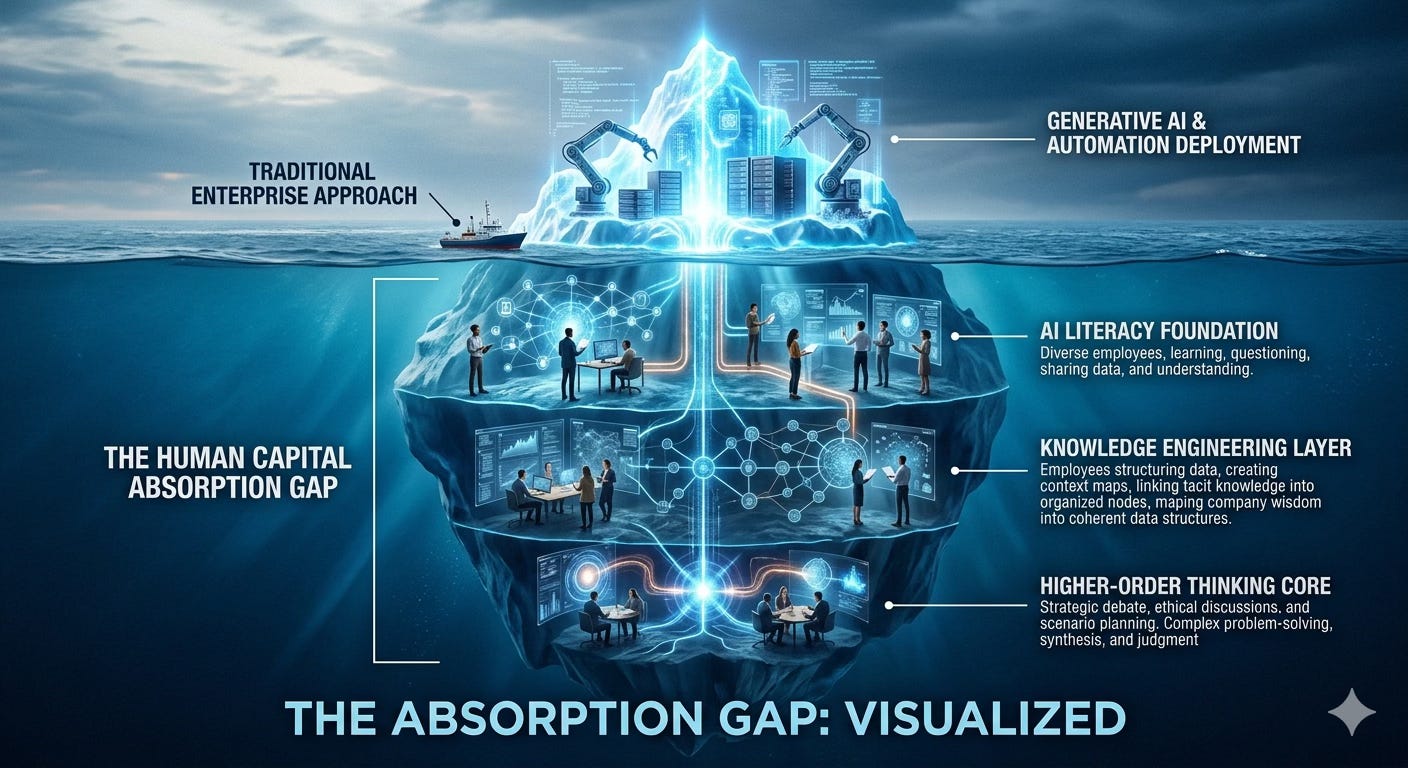

The Capability Absorption Gap in enterprise AI is widening, not narrowing, as model and tool development outstrips the ability of incumbent business leaders to adapt to what it makes possible.

Adoption programmes are not cutting it, and their focus on getting people to use the tools that companies have licensed is too tactical. Instead of adoption we need adaptation, and conventional ‘change management’ is not going to get us there.

We are making tremendous progress in AI tech, but this is currently outstripping the ability of organisations to deploy it.

Models and Agents Roundup

The past couple of weeks have seen the launch of major model updates from OpenAI and Anthropic - GPT-5.5 and Opus 4.7 - and a major new milestone for open models with DeepSeek 4.

Ethan Mollick rates GPT-5.5 highly, and from reviewing OpenAI’s prompting guide for 5.5, it seems to be a powerful model that responds well to sophisticated use cases. And DeepSeek’s new models are an order of magnitude more efficient than leading models from Anthropic and OpenAI.

Google has also recently released all kinds of stuff, from model updates to an enterprise agent platform. For an insight into their strategy, Ben Thompson’s interview with Google Cloud CEO Thomas Kurian is worth a read.

Meanwhile, Anthropic’s much-hyped, super-scary bug-hunting model Mythos is not as dangerous as we were led to believe: according to The Register, it is shaping up to be a nothingburger.

The pattern is clear: capability is compounding rapidly across performance, efficiency, and deployability. The gap is becoming organisational.

Intelligence on Tap, but the Plumbing is Faulty

Model progress is amazing, and there is a lot more going on in the open models world than just DeepSeek, so I remain of the view that most enterprise AI usage could eventually be driven by open and small models locally owned and hosted, supplemented by calls to proprietary models where needed. Both DeepSeek and Apple’s slow-burn edge computing strategy for on-chip AI point to huge potential for more efficient AI computing.

But for now at least, the Capability Absorption Gap in most organisations outside of software engineering means that enterprise AI usage is developing far slower than the technology. And given the pressure on CIOs to demonstrate ROI, many of them could end up relying on heavily compromised but good-enough Frankenstein’s Monster solutions from the main enterprise platform vendors.

Another recent Enterprise AI adoption research report shows companies struggling with adoption:

Despite near-universal belief in AI’s potential, most organizations are struggling to translate adoption into real business value. Executives are facing growing pressure and challenges around AI strategy, productivity expectations, security and governance, and shifting power dynamics.

The 2026 survey findings reveal 79% of organizations face challenges in adopting AI — a double-digit increase from 2025 — with 54% of C-suite executives admitting that adopting AI is tearing their company apart. This is despite the fact that 59% of companies are investing over $1 million annually in AI technology.

If we continue down the incremental change management route and target marginal productivity gains from applying AI to existing ways of working, then we could end up with slightly leaner versions of last-generation organisational systems, rather than better organisations overall.

That’s what happened with the initial electrification of factories from the 1880s onwards, and it took until the 1920s for major productivity gains to arrive, once factory owners had re-designed their work systems.

Technology diffusion is hard enough, but the re-tooling or re-design of organisational operating systems to really take advantage of AI is a big challenge for leaders whose whole careers have been shaped by navigating a bureaucracy.

Even the question of what this means for jobs is hard to answer at this liminal moment. Hiring and firing in response to AI is all over the place, which prompted the Financial Times to declare recently that the jobpocalypse narrative has been overdone:

“Can AI do this task?” is a useful starting point for thinking about how it might impact employment, but it is an ambiguous signal that forms only one part of a large and complex picture. Considering the other factors that can shape job growth, directly or indirectly, helps to explain why thus far those occupations that are most exposed to AI are as likely to have grown as to have shrunk.

Knowledge Engineering is Key to AI Readiness

One reason for the gap is a focus on targeting tool use and basic adoption rather than cultivating AI readiness in areas such as knowledge engineering.

Although the major consultancies could be rendered obsolete by AI in their current form, that is not stopping them from treating AI adoption like one last opportunity for body shopping, PowerPoint strategising and building dependence on external advisors.

If you write a manifesto about AI transformation and never once use the word “knowledge”… you might be missing what AI actually runs on.

We have written a lot about the advantage that wiki-based firms have over PPT-based firms in terms of their work being legible and learnable. The more you write things down and structure your knowledge, the better your AI agents will perform.

But that should not mean that companies seek to suck all the knowledge out of human workforces to codify it and then dispense with their services. Knowledge doesn’t really work like that. It is social, connected, often ambiguous and implicit rather than explicit.

Interestingly, some firms are buying up the conversational and collaborative exhaust of defunct start-ups to train AI models, which feels slightly ghoulish. Other consultants are starting to talk about AI-enabled knowledge transfer, such as this report by KM practitioner Ross Dawson and colleagues at Humans+AI.

I suspect there are many ways in which AI can help people collate, organise and share their knowledge that are more protective of the human element than just extraction and codification. It will be interesting to see how this develops.

Building Strategic Thinking Muscle

But we also need to go beyond basic knowledge engineering.

We are working a lot on the challenge of cultivating AI literacy for large firms in a way that brings together all layers of a large organisation and all knowledge levels in a common narrative of world-building.

Literacy is such an evocative term because it touches on language (both human and computational), world knowledge, clarity of thought and expression, as well as other areas of cognition and experience.

If our ambition is not just to adopt new tools to speed up the old manual process structures, but rather to adapt the organisation to what AI makes possible, then we need people to expand their literacy and their thinking in various ways, and not just learn how to operate a chatbot.

A valid critique of the messy liminal phase AI has reached in the workplace is that many of us are suffering from software brain - the idea that everything we do can be captured in a database and organised or automated.

Nilay Patel, Editor-in-Chief of The Verge, shared a widely discussed polemic last week arguing that most people - and a significant number of younger people - do not want to be flattened and automated away by AI, riffing on this idea of software brain:

For everyone else, AI is just a demanding slop monster. It’s a threat. I’m not saying regular people don’t use Excel or Airtable to plan their weddings or have fun throwing PowerPoint parties, or even that AI won’t be useful to regular people over time. I think a lot of people enjoy data and tracking different parts of their lives. I’m wearing a Whoop band as I write this. I’m just saying these things aren’t everything. Not everything about our lives can be measured and automated and optimized, and it shouldn’t be.

It’s a critique worth engaging with, and I believe there is a big enough landing zone for pro-human AI-enhanced organisations somewhere between automated workhouses and artisanal candle shops that we can aim for.

But if we want people to help build the new, rather than keep poking around in the old systems, then we need to cultivate their literacy and learning more effectively than we have done to date.

Brendan McCord recently shared a great introduction to the concept of Bildung and the role it played in transforming the Prussian education system after Prussia’s defeat by Napoleon, a history that is relevant to the adoption of AI:

With AI, we are building something like self-guided machines. Whether these systems liberate or merely displace is not settled. But the possibility of leisure at scale is real enough to become a serious question.

If AI can compress parts of instruction, it may deepen learning where it is used and clear ground for formation where it gives time back. But only if it preserves productive struggle rather than bypassing it.

The alternative is already visible: autocomplete for life. Not just help with expression, but the slow outsourcing of judgment itself. That is Bildung’s antithesis.

Another good read in this general direction is Neil Perkin’s recent newsletter on the need for an AI philosophy and not just an AI strategy.

It might sometimes feel like building better organisations is too hard, but there are plenty of examples around us of either a visionary leader or a compelling burning platform (or both!) creating the conditions where rapid evolution against the odds can produce winning systems.

Azeem Azhar shared a thought-provoking analysis from his team a few days ago about how Ukraine was forced to innovate in defence in order to survive, and it really shows what is possible when people have ownership, agency and very open and rapid feedback loops between producers and users. People can do anything with the right motivation.

If organisations respond to AI by forcing people to become more legible to machines, they will fail, socially, culturally, and ultimately economically.

But if they invest in human capability - judgment, learning, knowledge-sharing, and strategic thinking - they have a chance to build something far more powerful: organisations that are not just more efficient, but more adaptive, more coherent, and more human.

The Capability Absorption Gap will not be closed by a better toolset, but by better organisations.

It may be quicker and more effective to build net new capabilities and functions, rather than deploying AI in support of old ways of working, as factories initially tried with their electrification around the turn of the 20th Century.

‘Change’ is a very messy, human challenge; but there might be ways in which agentic AI can help us involve everybody and guide a distributed approach, helping track progress, and making the organisation more legible in real time. This is something we are exploring, so we might return to this topic soon.

The gap isn't primarily organisational readiness—the technology itself has to be substantially reworked to fit the specific productive context. Rosenberg's Inside the Black Box makes this the central point: the black box isn't transferable as-is, and the learning, adaptation, and complementary innovations required are so extensive that 'adoption' fundamentally mischaracterises what's happening.

Your electrification parallel actually illustrates this. The 1920s productivity gains weren't delayed by slow organisational learning—they waited on the development of safety practices, residual value tables, and the other infrastructure required for electric power transmission to work inside a factory rather than just delivering power to its perimeter. (I explored this in https://thepuzzleanditspieces.substack.com/p/the-failure-data-economy)

The same logic applies to GenAI. The current tooling is built around a generic conception of knowledge work. Getting real productive value means redeveloping it around specific work structures, which presupposes you understand those work structures first. The more fundamental question isn't whether people are ready for the tools, but whether the tools are being aimed at the right work in the first place.