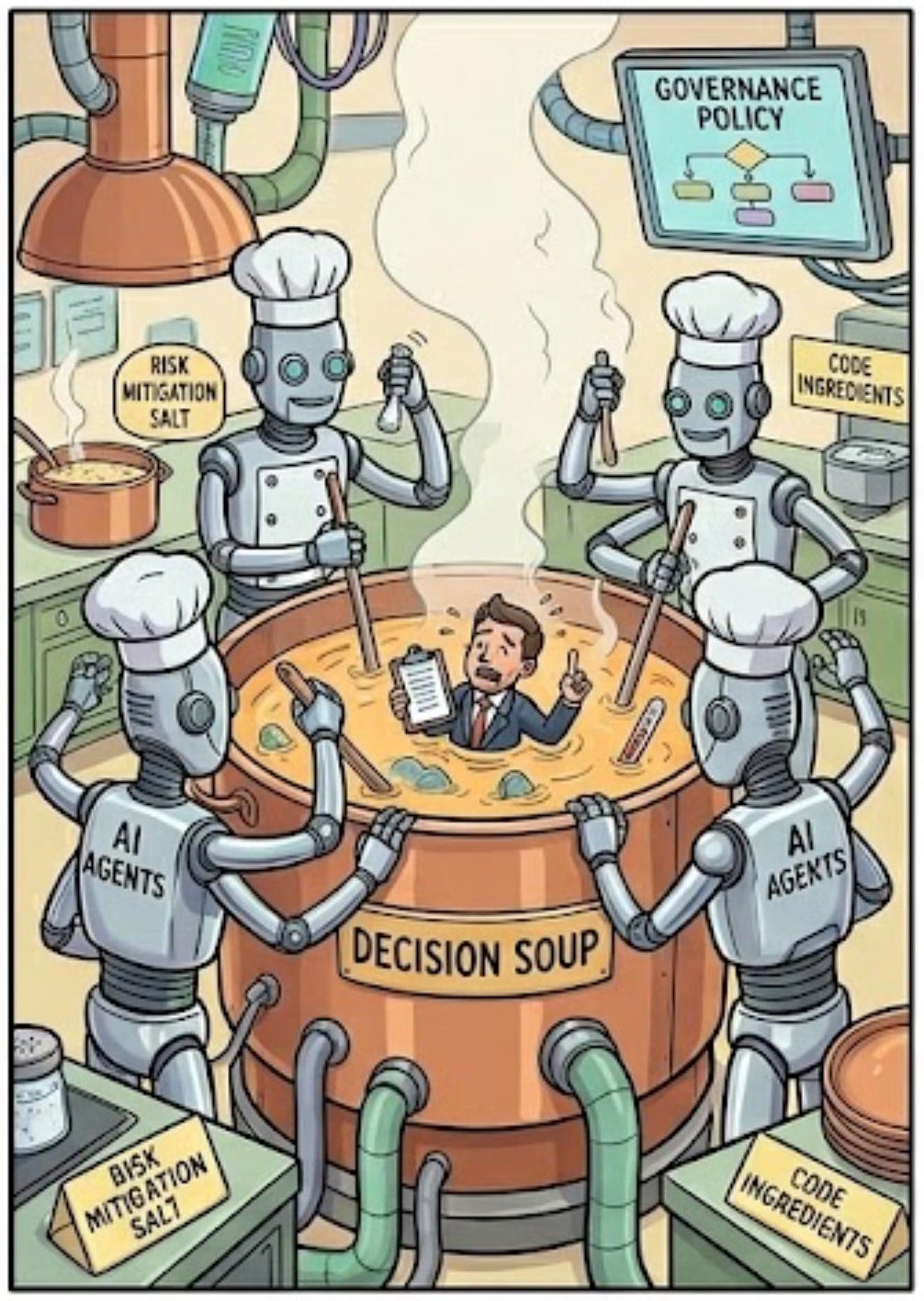

Humans in the Loop or in the Soup?

How can we create governance systems that are quick and detailed enough for the AI era, but also maintain human-in-the-loop safeguards and accountability?

Enterprise AI governance, security and safety are challenges that will require a multi-domain approach and imaginative solutions that combine technology, human factors, knowledge engineering and codification. These are issues that cannot just be delegated to CSOs and IT functions without collective leadership accountability.

Waiter! There’s a Human in my Loop!

The recent furore over the US Department of War’s threats to declare Anthropic a supply chain risk is an interesting example of how confusing things are likely to become.

Anthropic has been supplying a modified (and apparently more advanced) version of Claude to the US military through Palantir, but has tried to insist on two red lines governing its usage, namely (1) that it should not be used for broad-spectrum domestic surveillance, which might be technically illegal; and (2) that it should not be used to run fully autonomous weapons systems, because Anthropic do not believe it is yet reliable enough to do this safely.

In fact, as Henry Farrell wrote today, the US military is such a vast bureaucracy that the majority of use cases for LLMs will not be about autonomous weapon systems, but the logistics, information synthesis and practical management tasks that such a huge organisation requires.

Nevertheless, the Department of War has insisted it should have total control over how the tool is used. If Anthropic do not acquiesce, the company will be declared a supply chain risk, meaning not only that it will lose its contracts with the US government, but also that third parties offering services to government that depend on Anthropic’s technology will probably need to replace it with another model.

By way of context, Israel - one of the most advanced users of AI-enabled technology for targeting and hardly what might be called over-cautious - claims to use a double human-in-the-loop sign-off process to verify proposed targeted attacks. Meanwhile, the US “Secretary of War” rails against “stupid rules of engagement” and “traditional allies who wring their hands and clutch their pearls, hemming and hawing about the use of force.”

Inevitably in such a polarised political landscape, Anthropic have been seen as the good guys and OpenAI, who negotiated their own agreement with the DoW shortly after Anthropic’s deal collapsed, have been seen as the bad guys; but what this means for AI governance is likely to be less simple than it appears.

So where is AI governance headed and what are the practical alternatives to unlimited executive power?

Security Soup and Programmable Governance

I had a conversation with a very smart and accomplished CISO last week, and she suggested that we are heading to a place where human oversight is insufficient to maintain security in a complex enterprise. So, whilst we might want to see human-in-the-loop solutions to minimise AI risks, we probably need to think more imaginatively about how this works.

Historically, regulation and corporate governance involved a very slow process of risk analysis and political negotiation of the rules, which were then handed down to company officers to enforce manually through training, guidelines and so on. But this approach was prone to problems such as regulatory capture, or compliance theatre, where those in charge of upholding the rules lacked the power and influence to rein in the behaviour of colleagues who were generating profits from skirting them (e.g. in banking). It was also prone to regulatory inertia: regulators were often too busy fighting the last war and struggled to keep up with current or emerging risks.

So what should companies do to ensure security, safety and risk mitigation in their use of AI and related technologies?

Diginomica recently reported on a UK CIO event where executives expressed a desire to ensure AI safety without harming innovation, and several attendees talked more about a collaborative evolution than top-down transformation:

Anybody in a complex organization should think about whether they are enabling or leading. You enable through a coalition of the willing.

There have been several attempts to scope out some guidelines for trustworthy AI. For example, James Miller at TechTarget recently shared some thoughts on how to boost institutional accountability to align governance and structure, and laid out a framework to guide the shift from strategy to execution via trustworthy data and algorithms.

Elsewhere, those who want to see pro-human, safe and trustworthy AI are writing lots of guidelines, pleas and commendable nice words. But they often lack a credible, practical approach to implementation that is sufficiently robust, responsive and integrated with technology systems and platforms to really make a difference.

As one enterprise AI practitioner put it when reflecting on lessons learned:

Policy alone won’t save you. You need policy and technology and education working together. Any one of those by itself is insufficient.

Looking ahead, I think we are headed towards AI-enabled programmable governance (building on existing practices such as Policy-as-Code) where an organisation ingests and codifies regulatory, compliance and legal rulesets, and combines them with its own rules of the road to give governance systems the context needed to guide the organisation’s work. And of course, governance isn’t just about rules like “don’t leak data.” It also includes less technical concerns like “don’t violate brand values,” “don’t hallucinate pricing,” and “stay within budget.”
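As a minimal sketch of what Policy-as-Code might look like for rules of this kind, the Python below treats each rule as a named check that an agent's proposed action must pass before it runs. Everything here is an illustrative assumption: the `Rule` type, the rule names, and the fields on the action dictionary (`contains_pii`, `destination`, `quoted_price`, `catalogue_price`, `cost`, `budget_remaining`) are hypothetical and not taken from any particular governance product.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    """A named governance rule; check() returns True when the action complies."""
    name: str
    check: Callable[[dict], bool]


# Hypothetical rules mirroring "don't leak data", "don't hallucinate pricing"
# and "stay within budget". Field names are assumptions for illustration.
RULES = [
    Rule(
        "no_external_pii",
        lambda a: not (a.get("contains_pii") and a.get("destination") == "external"),
    ),
    Rule(
        "price_matches_catalogue",
        lambda a: a.get("quoted_price") is None
        or a.get("quoted_price") == a.get("catalogue_price"),
    ),
    Rule(
        "within_budget",
        lambda a: a.get("cost", 0) <= a.get("budget_remaining", 0),
    ),
]


def evaluate(action: dict) -> list[str]:
    """Return the names of every rule the proposed action would violate."""
    return [rule.name for rule in RULES if not rule.check(action)]


proposed = {
    "contains_pii": True,
    "destination": "external",
    "quoted_price": 90,
    "catalogue_price": 100,
    "cost": 5,
    "budget_remaining": 10,
}
violations = evaluate(proposed)  # an empty list would mean the action may proceed
```

The point of the design is that the rules live in data that leaders can read, review and extend, while enforcement happens in code before the action executes rather than in an audit afterwards.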

Where Leaders Need to Step up

The challenge in building and evolving fit-for-purpose AI security and governance systems is not to be under-estimated, and it covers everything from tech, data, and platforms to culture, behaviour, and process management.

Manual oversight is not enough. Human-in-the-loop is desirable, but at what level and supported by what kind of deep infrastructure underneath? Perhaps we will end up with agents surveilling other agents, and an organisational autonomic immune system that draws lessons from biology with single-purpose nano-bots running around in swarms to identify and contain anomalies or ‘foreign bodies’ at the network level.

But however our security, safety and governance systems evolve, they will need significantly greater codification of our rules, guidelines and threat vector identification than we have today. This is an area where the wider leadership function can meaningfully assist CISOs and CIOs without needing a great deal of technical knowledge: start by capturing specific rules or statements that make nice words actionable and (ideally) programmable.

It is a system challenge, but we have barely scratched the surface of writing the code for it.

We already know that when leaders only decide and delegate on AI topics, but don’t lead adoption, the outcome is sub-optimal. We need them to get their hands dirty and bring their experience and knowledge of their organisation’s value chain and strategy to the table. We already know that mandating adoption using the crudest possible KPIs is likely to optimise for the wrong outcomes.

We need leaders to lead the codification or knowledge engineering required to make our guidelines understandable to agents, APIs and systems.

This starts with understanding and connecting the knowledge objects of the organisation in shared knowledge graphs, so that the entities we work with (person, team, process, system, concept, goal, etc.) are addressable and findable. Once we have a good basic knowledge graph, we can start to make a system that is programmable (IF this THEN that, etc.), and that is where we can start to develop meaningful codification of how we want things to work.
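To make the step from knowledge graph to IF-THEN rule concrete, here is a small Python sketch under stated assumptions. The `KnowledgeGraph` class, the relation names (`member_of`, `owns`, `classified_as`) and the example entities are all hypothetical; a real organisation would more likely use a graph database or an RDF store than an in-memory dictionary.

```python
from collections import defaultdict


class KnowledgeGraph:
    """A tiny in-memory triple store: (subject, relation) -> set of objects."""

    def __init__(self):
        self.edges = defaultdict(set)

    def add(self, subject, relation, obj):
        self.edges[(subject, relation)].add(obj)

    def objects(self, subject, relation):
        # .get avoids creating empty entries for unknown keys
        return set(self.edges.get((subject, relation), set()))


# Hypothetical entities: a person, a team, a system and a concept.
kg = KnowledgeGraph()
kg.add("person:ana", "member_of", "team:finance")
kg.add("team:finance", "owns", "system:ledger")
kg.add("system:ledger", "classified_as", "concept:restricted")


def may_access(graph, person, system):
    """IF the system is restricted THEN only members of an owning team may access it."""
    if "concept:restricted" not in graph.objects(system, "classified_as"):
        return True
    teams = graph.objects(person, "member_of")
    return any(system in graph.objects(team, "owns") for team in teams)


ana_allowed = may_access(kg, "person:ana", "system:ledger")
bob_allowed = may_access(kg, "person:bob", "system:ledger")
```

The rule itself is trivial; the value comes from the graph, because the rule can only be written once people, teams and systems are addressable entities with explicit relationships between them.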

Neil Perkins recently shared a thoughtful article on knowledge engineering and why it matters, which is worth a few minutes to read:

… I think the idea of architecting knowledge for AI goes far deeper than just a technical practice. The quality of every AI-assisted decision, recommendation, and output is bounded by the quality of context it receives. This makes the curation of organisational knowledge (what gets captured, how it’s structured, how relationships between ideas are maintained) a fundamental strategic capability.

Humans in the Loop or Making the Soup? Why not Both?

To suggest that we are blowing past the point where humans in the loop can meaningfully oversee and manage safety, security and regulatory compliance need not be a defeatist or alarmist position. It is about the size and scale of the loops, and where people can maximise the value of their unique intuition and experience.

If the loops are too low-level, there is too much information to process unless we develop the kind of pre-cognition powers seen in a movie like Minority Report. If the loops are too high-level, then we review our leadership team’s nicely packaged monthly threats PowerPoint file only to find we were fatally compromised 29 days ago.

If we think of enterprise AI as an exoskeleton that empowers people rather than a robot that replaces them, perhaps we can use agentic systems where they can realistically perform autonomic immune functions, whilst surfacing issues and decision points at the right level of detail for human-in-the-loop review, allowing people to drill down quickly to see the specifics. But this will require a whole load of systems, data and knowledge graphs to support them.

Leading and participating in the codification effort is something all leaders can do today to take the pressure off CSOs, CISOs and CIOs, who cannot do it alone.

I use the terms in/on/off the loop as an easy way into discussing key concepts about oversight. However, there is a tendency to see 'in the loop' as always the best and safest option, and as you point out, there is a complex structure that sits underneath any AI system and in-the-loop decision point. Declaring something 'in the loop' masks the complexity, but too often I see it as the default position without really understanding what it means in practice.

Your CISO contact is right — human oversight alone can't keep pace. But the answer isn't removing humans from the loop. It's building infrastructure where the loop is structural rather than procedural.

The current model: slow regulation → policy documents → manual compliance → compliance theatre. Your post names exactly why this fails. Regulatory capture, inertia, insufficient power to enforce.

The alternative: governance as architecture. Every agent action is a signed event on a hash-chained causal graph. Authority scopes are checked before execution, not audited after the fact. Values conflicts halt the system and escalate to humans automatically. The human stays in the loop for the decisions that matter — values, identity, existence — while routine operations run with structural accountability built into the data layer.
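A minimal Python sketch of that idea, under loudly stated assumptions: it uses a simple linear hash chain rather than a full causal graph, an HMAC with a shared demo key as a stand-in for a real digital signature, and a hypothetical `EventChain` class with a per-agent scope table. It is not the architecture described in the linked posts, only an illustration of checking authority before execution and verifying history by walking the chain.

```python
import hashlib
import hmac
import json

SECRET = b"demo-key"  # hypothetical shared key; a real system would use per-agent signing keys
GENESIS = "0" * 64


def _digest(body: dict) -> str:
    """Deterministic SHA-256 over the event body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def _sign(event_hash: str) -> str:
    """HMAC stand-in for a signature (an assumption made for brevity)."""
    return hmac.new(SECRET, event_hash.encode(), hashlib.sha256).hexdigest()


class EventChain:
    def __init__(self, scopes: dict):
        self.scopes = scopes  # agent -> set of actions it is authorised to take
        self.events = []

    def record(self, agent: str, action: str) -> dict:
        # Authority is checked before execution, not audited afterwards.
        if action not in self.scopes.get(agent, set()):
            raise PermissionError(f"{agent} is not authorised to {action}")
        prev = self.events[-1]["hash"] if self.events else GENESIS
        body = {"agent": agent, "action": action, "prev": prev}
        event_hash = _digest(body)
        event = {**body, "hash": event_hash, "sig": _sign(event_hash)}
        self.events.append(event)
        return event

    def verify(self) -> bool:
        """Walk the chain and confirm every link, hash and signature."""
        prev = GENESIS
        for e in self.events:
            body = {"agent": e["agent"], "action": e["action"], "prev": e["prev"]}
            event_hash = _digest(body)
            if e["prev"] != prev or e["hash"] != event_hash:
                return False
            if not hmac.compare_digest(e["sig"], _sign(event_hash)):
                return False
            prev = e["hash"]
        return True


chain = EventChain({"agent-a": {"read_report", "draft_summary"}})
chain.record("agent-a", "read_report")
chain.record("agent-a", "draft_summary")
chain_ok = chain.verify()
```

Editing any recorded event after the fact changes its hash and breaks every later link, which is what makes the record verifiable without relying on the compliance team's word.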

Not "policy and technology and education working together." The policy IS the technology. Encoded as constraints, not documents. Verifiable by walking the chain, not by trusting the compliance team.

I've been building this — 38 posts on the architecture at mattsearles2.substack.com. The latest walks through cross-domain accountability chains where a single event traces through four governance domains on one hash chain.